Compare commits

99 Commits

| SHA1 |
|---|
| 9b35f463ae |
| 7b74486519 |
| b276b44ded |
| 3792649ed9 |
| 5f08956605 |
| 643d89ed7b |
| 8ca459593c |
| ee4208cc19 |
| f6330e4bb8 |
| 4f3cf10a5c |
| aa1954e81e |
| 482597bd0e |
| d4b90e681c |
| fcf9e43c4c |
| b8d8cd19d3 |
| 536c2d966c |
| f49cb0a5ac |
| cef6bb2340 |
| 4c03253b30 |
| ed09bb7370 |
| 5c46a25173 |
| d1538f00df |
| afa4acbbfb |
| d9a530485f |
| b2ad70b028 |
| f49aae8ab0 |
| f6debcf799 |
| edbc4f1359 |
| 5242f066e7 |
| af186800fa |
| 2bff4a5f44 |
| edb73d50cf |
| 3dc09d55fd |
| 079fb1b0f5 |
| 17b2aa8111 |
| 78d484cfe0 |
| 182e12817d |
| 9179d57cc9 |
| 9cb9b746d8 |
| 57a0089664 |
| 53f7608872 |
| 838ce5b45a |
| e878b9557e |
| 6638d37d6f |
| 4c29e15329 |
| 48890a2121 |
| e312d7e18c |
| 6c38aaebf3 |
| 18b0ac44bf |
| b6749eff2f |
| c73a103280 |
| a5d194f730 |
| 6320436b88 |
| 87325927ed |
| 4435cdf5b6 |
| b041654611 |
| e833dcefd4 |
| 29680f0ee8 |
| 670329700c |
| 62e17af311 |
| e3938a2c85 |
| 49ffc3d99e |
| 34bb1c34a2 |
| b27a56a296 |
| ecd7095123 |
| d449513861 |
| 6709e229b3 |
| 00a8f16262 |
| 00d16f8615 |
| c90676d2b2 |
| b89c011ad5 |
| c3de31aa88 |
| b6df0be865 |
| a89f84f76e |
| 5a916cc221 |
| dcf421ff93 |
| d655258311 |
| f6d13d4318 |
| d5350d7c30 |
| c63fd04c92 |
| 64b418a551 |
| 36c9c84047 |
| 88b4aaa301 |
| eac4c227c9 |
| d5eb7d4a55 |
| 80b15e16e2 |
| cfacedbb1a |
| c3bab033ed |
| 524f9bd84f |
| 4658ede9d6 |
| f7b463aca1 |
| c1a6e92b1d |
| eefb0e427e |
| c23d81b740 |
| 6dac231040 |
| 6fd3e531c3 |
| c1c05991cf |
| ab378e14d1 |
| c0f9bf9ef5 |

.gitignore (vendored, 5 changes)

@@ -8,4 +8,7 @@ src/version.h
dev-config/
db/
copy_executable_local.sh
nostr_login_lite/
nostr_login_lite/
style_guide/
nostr-tools

.gitmodules (vendored, 6 changes)

@@ -1,3 +1,9 @@
[submodule "nostr_core_lib"]
path = nostr_core_lib
url = https://git.laantungir.net/laantungir/nostr_core_lib.git
[submodule "c_utils_lib"]
path = c_utils_lib
url = ssh://git@git.laantungir.net:2222/laantungir/c_utils_lib.git
[submodule "text_graph"]
path = text_graph
url = ssh://git@git.laantungir.net:2222/laantungir/text_graph.git

@@ -2,4 +2,6 @@
description: "Brief description of what this command does"
---

Run build_and_push.sh, and supply a good git commit message.
Run increment_and_push.sh, and supply a good git commit message. For example:

./increment_and_push.sh "Fixed the bug with nip05 implementation"

.rooignore (Normal file, 1 change)

@@ -0,0 +1 @@
src/embedded_web_content.c

07.md (32 changes)

@@ -1,32 +0,0 @@
NIP-07
======

`window.nostr` capability for web browsers
------------------------------------------

`draft` `optional`

The `window.nostr` object may be made available by web browsers or extensions and websites or web-apps may make use of it after checking its availability.

That object must define the following methods:

```
async window.nostr.getPublicKey(): string // returns a public key as hex
async window.nostr.signEvent(event: { created_at: number, kind: number, tags: string[][], content: string }): Event // takes an event object, adds `id`, `pubkey` and `sig` and returns it
```

Aside from these two basic above, the following functions can also be implemented optionally:
```
async window.nostr.nip04.encrypt(pubkey, plaintext): string // returns ciphertext and iv as specified in nip-04 (deprecated)
async window.nostr.nip04.decrypt(pubkey, ciphertext): string // takes ciphertext and iv as specified in nip-04 (deprecated)
async window.nostr.nip44.encrypt(pubkey, plaintext): string // returns ciphertext as specified in nip-44
async window.nostr.nip44.decrypt(pubkey, ciphertext): string // takes ciphertext as specified in nip-44
```

### Recommendation to Extension Authors
To make sure that the `window.nostr` is available to nostr clients on page load, the authors who create Chromium and Firefox extensions should load their scripts by specifying `"run_at": "document_end"` in the extension's manifest.


### Implementation

See https://github.com/aljazceru/awesome-nostr#nip-07-browser-extensions.

AGENTS.md (32 changes)

@@ -27,7 +27,7 @@
## Critical Integration Issues

### Event-Based Configuration System
- **No traditional config files** - all configuration stored as kind 33334 Nostr events
- **No traditional config files** - all configuration stored in config table
- Admin private key shown **only once** on first startup
- Configuration changes require cryptographically signed events
- Database path determined by generated relay pubkey

@@ -35,7 +35,7 @@
### First-Time Startup Sequence
1. Relay generates admin keypair and relay keypair
2. Creates database file with relay pubkey as filename
3. Stores default configuration as kind 33334 event
3. Stores default configuration in config table
4. **CRITICAL**: Admin private key displayed once and never stored on disk

### Port Management

@@ -48,20 +48,30 @@
- Schema version 4 with JSON tag storage
- **Critical**: Event expiration filtering done at application level, not SQL level

### Configuration Event Structure
### Admin API Event Structure
```json
{
  "kind": 33334,
  "content": "C Nostr Relay Configuration",
  "kind": 23456,
  "content": "base64_nip44_encrypted_command_array",
  "tags": [
    ["d", "<relay_pubkey>"],
    ["relay_description", "value"],
    ["max_subscriptions_per_client", "25"],
    ["pow_min_difficulty", "16"]
    ["p", "<relay_pubkey>"]
  ]
}
```

**Configuration Commands** (encrypted in content):
- `["relay_description", "My Relay"]`
- `["max_subscriptions_per_client", "25"]`
- `["pow_min_difficulty", "16"]`

**Auth Rule Commands** (encrypted in content):
- `["blacklist", "pubkey", "hex_pubkey_value"]`
- `["whitelist", "pubkey", "hex_pubkey_value"]`

**Query Commands** (encrypted in content):
- `["auth_query", "all"]`
- `["system_command", "system_status"]`

### Process Management
```bash
# Kill existing relay processes

@@ -111,8 +121,8 @@ fuser -k 8888/tcp
- Event filtering done at C level, not SQL level for NIP-40 expiration

### Configuration Override Behavior
- CLI port override only affects first-time startup
- After database creation, all config comes from events
- CLI port override applies during first-time startup and existing relay restarts
- After database creation, all config comes from events (but CLI overrides can still be applied)
- Database path cannot be changed after initialization

## Non-Obvious Pitfalls
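
The AGENTS.md changes replace kind 33334 configuration events with kind 23456 admin events whose NIP-44-encrypted `content` carries a command array, grouped into configuration, auth-rule and query commands. A minimal sketch of dispatching on the first element of an already-decrypted array follows. The enum, `classify_admin_command()`, the plain `argv`/`argc` stand-in for the parsed JSON array, and the rule that any unrecognized name is a configuration key are all illustrative assumptions, not taken from the c-relay sources.

```c
#include <string.h>

/* Hypothetical categories mirroring the three command groups in AGENTS.md. */
typedef enum {
    ADMIN_CMD_INVALID = -1,
    ADMIN_CMD_CONFIG,    /* e.g. ["relay_description", "My Relay"] */
    ADMIN_CMD_AUTH_RULE, /* e.g. ["blacklist", "pubkey", "<hex>"] */
    ADMIN_CMD_QUERY      /* e.g. ["auth_query", "all"] */
} admin_cmd_category;

/*
 * Classify a decrypted command array by its first element.
 * argv/argc stand in for the parsed JSON array (assumption: the relay itself
 * works on cJSON values). Unknown names fall through to CONFIG because
 * configuration commands are keyed by the config key itself.
 */
admin_cmd_category classify_admin_command(const char *argv[], int argc) {
    if (argv == NULL || argc < 2 || argv[0] == NULL) {
        return ADMIN_CMD_INVALID;
    }
    const char *name = argv[0];
    if (strcmp(name, "blacklist") == 0 || strcmp(name, "whitelist") == 0) {
        /* Auth rules carry a rule type and a value: ["whitelist", "pubkey", "<hex>"] */
        return argc >= 3 ? ADMIN_CMD_AUTH_RULE : ADMIN_CMD_INVALID;
    }
    if (strcmp(name, "auth_query") == 0 || strcmp(name, "system_command") == 0) {
        return ADMIN_CMD_QUERY;
    }
    return ADMIN_CMD_CONFIG;
}
```

Treating unknown names as configuration keys keeps the dispatcher short, but a real handler would still need to reject keys that are not in the config table.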

Dockerfile.alpine-musl (Normal file, 142 changes)

@@ -0,0 +1,142 @@
# Alpine-based MUSL static binary builder for C-Relay
# Produces truly portable binaries with zero runtime dependencies

ARG DEBUG_BUILD=false

FROM alpine:3.19 AS builder

# Re-declare build argument in this stage
ARG DEBUG_BUILD=false

# Install build dependencies
RUN apk add --no-cache \
    build-base \
    musl-dev \
    git \
    cmake \
    pkgconfig \
    autoconf \
    automake \
    libtool \
    openssl-dev \
    openssl-libs-static \
    zlib-dev \
    zlib-static \
    curl-dev \
    curl-static \
    sqlite-dev \
    sqlite-static \
    linux-headers \
    wget \
    bash

# Set working directory
WORKDIR /build

# Build libsecp256k1 static (cached layer - only rebuilds if Alpine version changes)
RUN cd /tmp && \
    git clone https://github.com/bitcoin-core/secp256k1.git && \
    cd secp256k1 && \
    ./autogen.sh && \
    ./configure --enable-static --disable-shared --prefix=/usr \
        CFLAGS="-fPIC" && \
    make -j$(nproc) && \
    make install && \
    rm -rf /tmp/secp256k1

# Build libwebsockets static with minimal features (cached layer)
RUN cd /tmp && \
    git clone --depth 1 --branch v4.3.3 https://github.com/warmcat/libwebsockets.git && \
    cd libwebsockets && \
    mkdir build && cd build && \
    cmake .. \
        -DLWS_WITH_STATIC=ON \
        -DLWS_WITH_SHARED=OFF \
        -DLWS_WITH_SSL=ON \
        -DLWS_WITHOUT_TESTAPPS=ON \
        -DLWS_WITHOUT_TEST_SERVER=ON \
        -DLWS_WITHOUT_TEST_CLIENT=ON \
        -DLWS_WITHOUT_TEST_PING=ON \
        -DLWS_WITH_HTTP2=OFF \
        -DLWS_WITH_LIBUV=OFF \
        -DLWS_WITH_LIBEVENT=OFF \
        -DLWS_IPV6=ON \
        -DCMAKE_BUILD_TYPE=Release \
        -DCMAKE_INSTALL_PREFIX=/usr \
        -DCMAKE_C_FLAGS="-fPIC" && \
    make -j$(nproc) && \
    make install && \
    rm -rf /tmp/libwebsockets

# Copy only submodule configuration and git directory
COPY .gitmodules /build/.gitmodules
COPY .git /build/.git

# Clean up any stale submodule references (nips directory is not a submodule)
RUN git rm --cached nips 2>/dev/null || true

# Initialize submodules (cached unless .gitmodules changes)
RUN git submodule update --init --recursive

# Copy nostr_core_lib source files (cached unless nostr_core_lib changes)
COPY nostr_core_lib /build/nostr_core_lib/

# Copy c_utils_lib source files (cached unless c_utils_lib changes)
COPY c_utils_lib /build/c_utils_lib/

# Build c_utils_lib with MUSL-compatible flags (cached unless c_utils_lib changes)
RUN cd c_utils_lib && \
    sed -i 's/CFLAGS = -Wall -Wextra -std=c99 -O2 -g/CFLAGS = -U_FORTIFY_SOURCE -D_FORTIFY_SOURCE=0 -Wall -Wextra -std=c99 -O2 -g/' Makefile && \
    make clean && \
    make

# Build nostr_core_lib with required NIPs (cached unless nostr_core_lib changes)
# Disable fortification in build.sh to prevent __*_chk symbol issues
# NIPs: 001(Basic), 006(Keys), 013(PoW), 017(DMs), 019(Bech32), 044(Encryption), 059(Gift Wrap - required by NIP-17)
RUN cd nostr_core_lib && \
    chmod +x build.sh && \
    sed -i 's/CFLAGS="-Wall -Wextra -std=c99 -fPIC -O2"/CFLAGS="-U_FORTIFY_SOURCE -D_FORTIFY_SOURCE=0 -Wall -Wextra -std=c99 -fPIC -O2"/' build.sh && \
    rm -f *.o *.a 2>/dev/null || true && \
    ./build.sh --nips=1,6,13,17,19,44,59

# Copy c-relay source files LAST (only this layer rebuilds on source changes)
COPY src/ /build/src/
COPY Makefile /build/Makefile

# Build c-relay with full static linking (only rebuilds when src/ changes)
# Disable fortification to avoid __*_chk symbols that don't exist in MUSL
# Use conditional compilation flags based on DEBUG_BUILD argument
RUN if [ "$DEBUG_BUILD" = "true" ]; then \
        CFLAGS="-g -O0 -DDEBUG"; \
        STRIP_CMD=""; \
        echo "Building with DEBUG symbols enabled"; \
    else \
        CFLAGS="-O2"; \
        STRIP_CMD="strip /build/c_relay_static"; \
        echo "Building optimized production binary"; \
    fi && \
    gcc -static $CFLAGS -Wall -Wextra -std=c99 \
        -U_FORTIFY_SOURCE -D_FORTIFY_SOURCE=0 \
        -I. -Ic_utils_lib/src -Inostr_core_lib -Inostr_core_lib/nostr_core \
        -Inostr_core_lib/cjson -Inostr_core_lib/nostr_websocket \
        src/main.c src/config.c src/dm_admin.c src/request_validator.c \
        src/nip009.c src/nip011.c src/nip013.c src/nip040.c src/nip042.c \
        src/websockets.c src/subscriptions.c src/api.c src/embedded_web_content.c \
        -o /build/c_relay_static \
        c_utils_lib/libc_utils.a \
        nostr_core_lib/libnostr_core_x64.a \
        -lwebsockets -lssl -lcrypto -lsqlite3 -lsecp256k1 \
        -lcurl -lz -lpthread -lm -ldl && \
    eval "$STRIP_CMD"

# Verify it's truly static
RUN echo "=== Binary Information ===" && \
    file /build/c_relay_static && \
    ls -lh /build/c_relay_static && \
    echo "=== Checking for dynamic dependencies ===" && \
    (ldd /build/c_relay_static 2>&1 || echo "Binary is static") && \
    echo "=== Build complete ==="

# Output stage - just the binary
FROM scratch AS output
COPY --from=builder /build/c_relay_static /c_relay_static

IMPLEMENT_API.md (513 changes)

@@ -1,513 +0,0 @@
# Implementation Plan: Enhanced Admin Event API Structure

## Current Issue

The current admin event routing at [`main.c:3248-3268`](src/main.c:3248) has a security vulnerability:

```c
if (event_kind == 23455 || event_kind == 23456) {
    // Admin event processing
    int admin_result = process_admin_event_in_config(event, admin_error, sizeof(admin_error), wsi);
} else {
    // Regular event storage and broadcasting
}
```

**Problem**: Any event with these kinds gets routed to admin processing, regardless of authorization. This allows unauthorized users to send admin events that could be processed as legitimate admin commands.

**Note**: Event kinds 33334 and 33335 are no longer used and have been removed from the admin event routing.

## Required Security Enhancement

Admin events must be validated for proper authorization BEFORE routing to admin processing:

1. **Relay Public Key Check**: Event must have a `p` tag equal to the relay's public key
2. **Admin Signature Check**: Event must be signed by an authorized admin private key
3. **Fallback to Regular Processing**: If authorization fails, treat as regular event (not admin event)

## Implementation Plan

### Phase 1: Add Admin Authorization Validation

#### 1.1 Create Consolidated Admin Authorization Function
**Location**: [`src/main.c`](src/main.c) or [`src/config.c`](src/config.c)

```c
/**
 * Consolidated admin event authorization validator
 * Implements defense-in-depth security for admin events
 *
 * @param event - The event to validate for admin authorization
 * @param error_message - Buffer for detailed error messages
 * @param error_size - Size of error message buffer
 * @return 0 if authorized, -1 if unauthorized, -2 if validation error
 */
int is_authorized_admin_event(cJSON* event, char* error_message, size_t error_size) {
    if (!event) {
        snprintf(error_message, error_size, "admin_auth: null event");
        return -2;
    }

    // Extract event components
    cJSON* kind_obj = cJSON_GetObjectItem(event, "kind");
    cJSON* pubkey_obj = cJSON_GetObjectItem(event, "pubkey");
    cJSON* tags_obj = cJSON_GetObjectItem(event, "tags");

    if (!kind_obj || !pubkey_obj || !tags_obj) {
        snprintf(error_message, error_size, "admin_auth: missing required fields");
        return -2;
    }

    // Validation Layer 1: Kind Check
    int event_kind = (int)cJSON_GetNumberValue(kind_obj);
    if (event_kind != 23455 && event_kind != 23456) {
        snprintf(error_message, error_size, "admin_auth: not an admin event kind");
        return -1;
    }

    // Validation Layer 2: Relay Targeting Check
    const char* relay_pubkey = get_config_value("relay_pubkey");
    if (!relay_pubkey) {
        snprintf(error_message, error_size, "admin_auth: relay pubkey not configured");
        return -2;
    }

    // Check for 'p' tag targeting this relay
    int has_relay_target = 0;
    if (cJSON_IsArray(tags_obj)) {
        cJSON* tag = NULL;
        cJSON_ArrayForEach(tag, tags_obj) {
            if (cJSON_IsArray(tag) && cJSON_GetArraySize(tag) >= 2) {
                cJSON* tag_name = cJSON_GetArrayItem(tag, 0);
                cJSON* tag_value = cJSON_GetArrayItem(tag, 1);

                if (cJSON_IsString(tag_name) && cJSON_IsString(tag_value)) {
                    const char* name = cJSON_GetStringValue(tag_name);
                    const char* value = cJSON_GetStringValue(tag_value);

                    if (strcmp(name, "p") == 0 && strcmp(value, relay_pubkey) == 0) {
                        has_relay_target = 1;
                        break;
                    }
                }
            }
        }
    }

    if (!has_relay_target) {
        // Admin event for different relay - not unauthorized, just not for us
        snprintf(error_message, error_size, "admin_auth: admin event for different relay");
        return -1;
    }

    // Validation Layer 3: Admin Signature Check (only if targeting this relay)
    const char* event_pubkey = cJSON_GetStringValue(pubkey_obj);
    if (!event_pubkey) {
        snprintf(error_message, error_size, "admin_auth: invalid pubkey format");
        return -2;
    }

    const char* admin_pubkey = get_config_value("admin_pubkey");
    if (!admin_pubkey || strcmp(event_pubkey, admin_pubkey) != 0) {
        // This is the ONLY case where we log as "Unauthorized admin event attempt"
        // because it's targeting THIS relay but from wrong admin
        snprintf(error_message, error_size, "admin_auth: unauthorized admin for this relay");
        log_warning("SECURITY: Unauthorized admin event attempt for this relay");
        return -1;
    }

    // All validation layers passed
    log_info("ADMIN: Admin event authorized");
    return 0;
}
```
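
To exercise the three validation layers without cJSON or the relay's config store, here is a minimal sketch (not part of the plan) that restates the same decision logic over plain structs. The `tag_pair` and `admin_event_view` types, `check_admin_event()`, and passing the relay and admin pubkeys in as arguments instead of calling `get_config_value()` are assumptions for illustration. The return codes follow the plan: 0 authorized, -1 unauthorized or not addressed to this relay, -2 validation error.

```c
#include <stddef.h>
#include <stdio.h>
#include <string.h>

/* Hypothetical flattened tag and event types; the plan reads these fields from cJSON. */
typedef struct {
    const char *name;
    const char *value;
} tag_pair;

typedef struct {
    int kind;
    const char *pubkey;   /* hex pubkey of the event author */
    const tag_pair *tags;
    size_t tag_count;
} admin_event_view;

/* Same three layers and return codes as is_authorized_admin_event(). */
int check_admin_event(const admin_event_view *ev, const char *relay_pubkey,
                      const char *admin_pubkey, char *err, size_t err_size) {
    if (ev == NULL || ev->pubkey == NULL || (ev->tag_count > 0 && ev->tags == NULL)) {
        snprintf(err, err_size, "admin_auth: missing required fields");
        return -2;
    }

    /* Layer 1: only the two admin kinds are considered. */
    if (ev->kind != 23455 && ev->kind != 23456) {
        snprintf(err, err_size, "admin_auth: not an admin event kind");
        return -1;
    }

    /* Layer 2: some "p" tag must name this relay. */
    if (relay_pubkey == NULL) {
        snprintf(err, err_size, "admin_auth: relay pubkey not configured");
        return -2;
    }
    int targets_relay = 0;
    for (size_t i = 0; i < ev->tag_count; i++) {
        const tag_pair *t = &ev->tags[i];
        if (t->name && t->value && strcmp(t->name, "p") == 0 &&
            strcmp(t->value, relay_pubkey) == 0) {
            targets_relay = 1;
            break;
        }
    }
    if (!targets_relay) {
        snprintf(err, err_size, "admin_auth: admin event for different relay");
        return -1;
    }

    /* Layer 3: the author must be the configured admin. This compares pubkeys
       only; verifying the Schnorr signature is assumed to happen earlier. */
    if (admin_pubkey == NULL || strcmp(ev->pubkey, admin_pubkey) != 0) {
        snprintf(err, err_size, "admin_auth: unauthorized admin for this relay");
        return -1;
    }
    return 0;
}
```

An event from the admin key that tags a different relay returns -1 with "admin event for different relay", which preserves the plan's distinction between events simply not addressed to this relay and genuine unauthorized attempts.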

#### 1.2 Update Event Routing Logic
**Location**: [`main.c:3248`](src/main.c:3248)

```c
// Current problematic code:
if (event_kind == 23455 || event_kind == 23456) {
    // Admin event processing
    int admin_result = process_admin_event_in_config(event, admin_error, sizeof(admin_error), wsi);
} else {
    // Regular event storage and broadcasting
}

// Enhanced secure code with consolidated authorization:
if (result == 0) {
    cJSON* kind_obj = cJSON_GetObjectItem(event, "kind");
    if (kind_obj && cJSON_IsNumber(kind_obj)) {
        int event_kind = (int)cJSON_GetNumberValue(kind_obj);

        // Check if this is an admin event
        if (event_kind == 23455 || event_kind == 23456) {
            // Use consolidated authorization check
            char auth_error[512] = {0};
            int auth_result = is_authorized_admin_event(event, auth_error, sizeof(auth_error));

            if (auth_result == 0) {
                // Authorized admin event - process through admin API
                char admin_error[512] = {0};
                int admin_result = process_admin_event_in_config(event, admin_error, sizeof(admin_error), wsi);

                if (admin_result != 0) {
                    result = -1;
                    strncpy(error_message, admin_error, sizeof(error_message) - 1);
                }
                // Admin events are NOT broadcast to subscriptions
            } else {
                // Unauthorized admin event - treat as regular event
                log_warning("Unauthorized admin event treated as regular event");
                if (store_event(event) != 0) {
                    result = -1;
                    strncpy(error_message, "error: failed to store event", sizeof(error_message) - 1);
                } else {
                    broadcast_event_to_subscriptions(event);
                }
            }
        } else {
            // Regular event - normal processing
            if (store_event(event) != 0) {
                result = -1;
                strncpy(error_message, "error: failed to store event", sizeof(error_message) - 1);
            } else {
                broadcast_event_to_subscriptions(event);
            }
        }
    }
}
```
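
The routing rules in 1.2 reduce to a small decision table: an admin kind that passes authorization goes to the admin API and is never broadcast, while everything else, including admin kinds that fail authorization, is stored and broadcast. A minimal sketch of that table as a pure function, assuming the `is_authorized_admin_event()` result has already been computed; the `event_route` enum and `route_event()` are hypothetical names, not part of the plan.

```c
/* Hypothetical routing outcomes for the decision in 1.2. */
typedef enum {
    ROUTE_ADMIN_API,           /* authorized admin event: process, never broadcast */
    ROUTE_STORE_AND_BROADCAST  /* regular event, or admin kind that failed authorization */
} event_route;

/* Admin kinds handled by the relay's admin API. */
static int is_admin_kind(int kind) {
    return kind == 23455 || kind == 23456;
}

/* auth_result follows the plan: 0 authorized, -1 unauthorized, -2 malformed. */
event_route route_event(int kind, int auth_result) {
    if (is_admin_kind(kind) && auth_result == 0) {
        return ROUTE_ADMIN_API;
    }
    return ROUTE_STORE_AND_BROADCAST;
}
```

Keeping the decision separate from side effects such as `store_event()` makes the fallback behaviour for unauthorized admin kinds easy to unit-test.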

### Phase 2: Enhanced Admin Event Processing

#### 2.1 Admin Event Validation in Config System
**Location**: [`src/config.c`](src/config.c) - [`process_admin_event_in_config()`](src/config.c:2065)

Add additional validation within the admin processing function:

```c
int process_admin_event_in_config(cJSON* event, char* error_buffer, size_t error_buffer_size, struct lws* wsi) {
    // Double-check authorization (defense in depth).
    // is_authorized_admin_event() returns 0 when authorized, so any non-zero result is a rejection.
    char auth_error[512] = {0};
    if (is_authorized_admin_event(event, auth_error, sizeof(auth_error)) != 0) {
        snprintf(error_buffer, error_buffer_size, "unauthorized: not a valid admin event");
        return -1;
    }

    // Continue with existing admin event processing...
    // ... rest of function unchanged
}
```

#### 2.2 Logging and Monitoring
Add comprehensive logging for admin event attempts:

```c
// In the routing logic - enhanced logging
cJSON* kind_obj = cJSON_GetObjectItem(event, "kind");
cJSON* pubkey_obj = cJSON_GetObjectItem(event, "pubkey");
int event_kind = (kind_obj && cJSON_IsNumber(kind_obj)) ? (int)cJSON_GetNumberValue(kind_obj) : -1;
// Fall back to "unknown" so a missing or non-string pubkey never reaches %s as NULL
const char* event_pubkey = (pubkey_obj && cJSON_IsString(pubkey_obj)) ? cJSON_GetStringValue(pubkey_obj) : "unknown";

char auth_error[512] = {0};
if (is_authorized_admin_event(event, auth_error, sizeof(auth_error)) == 0) {
    char log_msg[256];
    snprintf(log_msg, sizeof(log_msg),
             "ADMIN EVENT: Authorized admin event (kind=%d) from pubkey=%.16s...",
             event_kind, event_pubkey);
    log_info(log_msg);
} else if (event_kind == 23455 || event_kind == 23456) {
    // This catches unauthorized admin event attempts
    char log_msg[256];
    snprintf(log_msg, sizeof(log_msg),
             "SECURITY: Unauthorized admin event attempt (kind=%d) from pubkey=%.16s...",
             event_kind, event_pubkey);
    log_warning(log_msg);
}
```
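
The `%.16s` conversion in these log lines prints at most the first 16 characters of the pubkey, which keeps log lines short without writing the full key. A minimal sketch of the same formatting, not taken from the plan: `format_admin_log()` is a hypothetical helper, and it substitutes `"unknown"` for a NULL pubkey because passing NULL to `%s` is undefined behaviour.

```c
#include <stddef.h>
#include <stdio.h>

/*
 * Build the authorized/unauthorized admin log line. %.16s truncates the
 * pubkey to at most 16 characters. Returns snprintf's result, i.e. the
 * length the full line would have.
 */
int format_admin_log(char *buf, size_t size, int authorized, int kind, const char *pubkey) {
    const char *pk = pubkey ? pubkey : "unknown";
    if (authorized) {
        return snprintf(buf, size,
                        "ADMIN EVENT: Authorized admin event (kind=%d) from pubkey=%.16s...",
                        kind, pk);
    }
    return snprintf(buf, size,
                    "SECURITY: Unauthorized admin event attempt (kind=%d) from pubkey=%.16s...",
                    kind, pk);
}
```

Because the precision only caps the output, a pubkey shorter than 16 characters (such as the `"unknown"` fallback) is printed in full.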

## Phase 3: Unified Output Flow Architecture

### 3.1 Current Output Flow Analysis

After analyzing both [`main.c`](src/main.c) and [`config.c`](src/config.c), the **admin event responses already flow through the standard WebSocket output pipeline**. This is the correct architecture and requires no changes.

#### Standard WebSocket Output Pipeline

**Regular Events** ([`main.c:2978-2996`](src/main.c:2978)):
```c
// Database query responses
unsigned char* buf = malloc(LWS_PRE + msg_len);
memcpy(buf + LWS_PRE, msg_str, msg_len);
lws_write(wsi, buf + LWS_PRE, msg_len, LWS_WRITE_TEXT);
free(buf);
```

**OK Responses** ([`main.c:3342-3375`](src/main.c:3342)):
```c
// Event processing results: ["OK", event_id, success_boolean, message]
unsigned char *buf = malloc(LWS_PRE + response_len);
memcpy(buf + LWS_PRE, response_str, response_len);
lws_write(wsi, buf + LWS_PRE, response_len, LWS_WRITE_TEXT);
free(buf);
```

#### Admin Event Output Pipeline (Already Unified)

**Admin Responses** ([`config.c:2363-2414`](src/config.c:2363)):
```c
// Admin query responses use IDENTICAL pattern
int send_websocket_response_data(struct lws* wsi, cJSON* response_data) {
    // ... response_str / response_len are produced from response_data (elided in this excerpt)
    unsigned char* buf = malloc(LWS_PRE + response_len);
    memcpy(buf + LWS_PRE, response_str, response_len);

    // Same lws_write() call as regular events
    int result = lws_write(wsi, buf + LWS_PRE, response_len, LWS_WRITE_TEXT);

    free(buf);
    return result;
}
```
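
All three excerpts share one buffer pattern: `lws_write()` needs `LWS_PRE` bytes of writable headroom in front of the payload for protocol framing, so the code allocates `LWS_PRE + len`, copies the message to `buf + LWS_PRE`, passes that offset pointer to the writer, and frees the whole allocation afterwards. A library-free sketch of the pattern follows. `HEADROOM` is a hypothetical stand-in for `LWS_PRE` (whose real value comes from the libwebsockets headers), `frame_writer` and `capture_writer` are hypothetical replacements for `lws_write()`, and the sketch adds the `malloc` failure check the excerpts omit.

```c
#include <stdlib.h>
#include <string.h>

/* Stand-in for libwebsockets' LWS_PRE; the real value comes from <libwebsockets.h>. */
#define HEADROOM 16

/* Hypothetical writer in place of lws_write(); returns bytes written or -1. */
typedef int (*frame_writer)(unsigned char *payload, size_t len, void *ctx);

/*
 * Copy msg into a buffer with HEADROOM spare bytes in front of it, hand the
 * payload pointer (not the allocation start) to the writer, then free the
 * whole allocation. Returns the writer's result, or -1 if allocation fails.
 */
int send_with_headroom(const char *msg, size_t len, frame_writer write_fn, void *ctx) {
    unsigned char *buf = malloc(HEADROOM + len);
    if (buf == NULL) {
        return -1;
    }
    memcpy(buf + HEADROOM, msg, len);
    int result = write_fn(buf + HEADROOM, len, ctx);
    free(buf);
    return result;
}

/* Example writer that captures the payload into a caller-owned buffer. */
typedef struct {
    char data[64];
    size_t len;
} capture_buf;

int capture_writer(unsigned char *payload, size_t len, void *ctx) {
    capture_buf *cap = ctx;
    if (len >= sizeof cap->data) {
        return -1;
    }
    memcpy(cap->data, payload, len);
    cap->data[len] = '\0';
    cap->len = len;
    return (int)len;
}
```

Freeing `buf` rather than `buf + HEADROOM` matters: only the pointer returned by `malloc` may be passed to `free`.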

### 3.2 Unified Output Flow Confirmation

✅ **Admin responses already use the same WebSocket transmission mechanism as regular events**

✅ **Both admin and regular events use identical buffer allocation patterns**

✅ **Both admin and regular events use the same [`lws_write()`](src/config.c:2393) function**

✅ **Both admin and regular events follow the same cleanup patterns**

### 3.3 Output Flow Integration Points

The admin event processing in [`config.c:2436`](src/config.c:2436) already integrates correctly with the unified output system:

1. **Admin Query Processing** ([`config.c:2568-2583`](src/config.c:2568)):
   - Auth queries return structured JSON via [`send_websocket_response_data()`](src/config.c:2571)
   - System commands return status data via [`send_websocket_response_data()`](src/config.c:2631)

2. **Response Format Consistency**:
   - Admin responses use standard JSON format
   - Regular events use standard Nostr event format
   - Both transmitted through same WebSocket pipeline

3. **Error Handling Consistency**:
   - Admin errors returned via same WebSocket connection
   - Regular event errors returned via OK messages
   - Both use identical transmission mechanism

### 3.4 Key Architectural Benefits

**No Changes Required**: The output flow is already unified and correctly implemented.

**Security Separation**: Admin events are processed separately but responses flow through the same secure WebSocket channel.

**Performance Consistency**: Both admin and regular responses use the same optimized transmission path.

**Maintenance Simplicity**: Single WebSocket output pipeline reduces complexity and potential bugs.

### 3.5 Admin Event Flow Summary

```
Admin Event Input → Authorization Check → Admin Processing → Unified WebSocket Output
Regular Event Input → Validation → Storage + Broadcast → Unified WebSocket Output
```

Both flows converge at the **Unified WebSocket Output** stage, which is already correctly implemented.

## Phase 4: Integration Points for Secure Admin Event Routing

### 4.1 Configuration System Integration

**Required Configuration Values**:
- `admin_pubkey` - Public key of authorized administrator
- `relay_pubkey` - Public key of this relay instance

**Integration Points**:
1. [`get_config_value()`](src/config.c) - Used by authorization function
2. [`get_relay_pubkey_cached()`](src/config.c) - Used for relay targeting validation
3. Configuration loading during startup - Must ensure admin/relay pubkeys are available

### 4.3 Forward Declarations Required

**Location**: [`src/main.c`](src/main.c) - Add near other forward declarations (around line 230)

```c
// Forward declarations for enhanced admin event authorization
int is_authorized_admin_event(cJSON* event, char* error_message, size_t error_size);
```

### 4.4 Error Handling Integration

**Enhanced Error Response System**:

```c
// In main.c event processing - enhanced error handling for admin events
if (auth_result != 0) {
    // Admin authorization failed - send detailed OK response
    cJSON* event_id = cJSON_GetObjectItem(event, "id");
    if (event_id && cJSON_IsString(event_id)) {
        cJSON* response = cJSON_CreateArray();
        cJSON_AddItemToArray(response, cJSON_CreateString("OK"));
        cJSON_AddItemToArray(response, cJSON_CreateString(cJSON_GetStringValue(event_id)));
        cJSON_AddItemToArray(response, cJSON_CreateBool(0)); // Failed
        cJSON_AddItemToArray(response, cJSON_CreateString(auth_error));

        // Send via standard WebSocket output pipeline
        char *response_str = cJSON_Print(response);
        if (response_str) {
            size_t response_len = strlen(response_str);
            unsigned char *buf = malloc(LWS_PRE + response_len);
            if (buf) {
                memcpy(buf + LWS_PRE, response_str, response_len);
                lws_write(wsi, buf + LWS_PRE, response_len, LWS_WRITE_TEXT);
                free(buf);
            }
            free(response_str);
        }
        cJSON_Delete(response);
    }
}
```
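
The 4.4 handler builds the NIP-01 `["OK", <event_id>, <true|false>, <message>]` reply with cJSON, which escapes strings for it. If such a reply were ever formatted by hand, quotes, backslashes and control characters in `auth_error` would need escaping. A dependency-free sketch of that formatting follows; `build_ok_message()` and `append_json_string()` are hypothetical helpers, and the sketch escapes every control character with the six-character unicode form, which is valid JSON though cJSON itself prefers short forms such as `\n`.

```c
#include <stdio.h>
#include <string.h>

/*
 * Append s as a quoted JSON string. Escapes '"' and '\\'; other characters
 * below 0x20 become six-character unicode escapes. Returns characters
 * written (excluding the NUL) or -1 if out is too small.
 */
static int append_json_string(char *out, size_t size, const char *s) {
    size_t n = 0;
    if (size < 3) {
        return -1; /* need at least two quotes plus NUL */
    }
    out[n++] = '"';
    for (; *s != '\0'; s++) {
        unsigned char c = (unsigned char)*s;
        char esc[7];
        size_t esc_len;
        if (c == '"' || c == '\\') {
            esc[0] = '\\';
            esc[1] = (char)c;
            esc_len = 2;
        } else if (c < 0x20) {
            snprintf(esc, sizeof esc, "\\u%04x", (unsigned)c);
            esc_len = 6;
        } else {
            esc[0] = (char)c;
            esc_len = 1;
        }
        if (n + esc_len + 2 > size) {
            return -1; /* leave room for the closing quote and NUL */
        }
        memcpy(out + n, esc, esc_len);
        n += esc_len;
    }
    out[n++] = '"';
    out[n] = '\0';
    return (int)n;
}

/* Format ["OK", <event_id>, <true|false>, <message>] into out; returns length or -1. */
int build_ok_message(char *out, size_t size, const char *event_id, int accepted,
                     const char *message) {
    int w = snprintf(out, size, "[\"OK\",");
    if (w < 0 || (size_t)w >= size) {
        return -1;
    }
    size_t n = (size_t)w;
    if ((w = append_json_string(out + n, size - n, event_id)) < 0) {
        return -1;
    }
    n += (size_t)w;
    w = snprintf(out + n, size - n, ",%s,", accepted ? "true" : "false");
    if (w < 0 || (size_t)w >= size - n) {
        return -1;
    }
    n += (size_t)w;
    if ((w = append_json_string(out + n, size - n, message)) < 0) {
        return -1;
    }
    n += (size_t)w;
    if (n + 2 > size) {
        return -1; /* room for ']' and NUL */
    }
    out[n++] = ']';
    out[n] = '\0';
    return (int)n;
}
```

With cJSON in the build, the serializer already handles this escaping, so a helper like this would matter only for responses assembled with `snprintf`.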

|

||||

|

||||

### 4.5 Logging Integration Points

|

||||

|

||||

**Console Logging**: Uses existing [`log_warning()`](src/main.c:993), [`log_info()`](src/main.c:972) functions

|

||||

|

||||

**Security Event Categories**:

|

||||

- Admin authorization success logged via `log_info()`

|

||||

- Admin authorization failures logged via `log_warning()`

|

||||

- Admin event processing logged via existing admin logging

|

||||

|

||||

## Phase 5: Detailed Function Specifications

### 5.1 Core Authorization Function

**Function**: `is_authorized_admin_event()`

**Location**: [`src/main.c`](src/main.c) or [`src/config.c`](src/config.c)

**Dependencies**:

- `get_config_value()` for admin/relay pubkeys
- `log_warning()` and `log_info()` for logging
- `cJSON` library for event parsing

**Return Values**:

- `0` - Event is authorized for admin processing
- `-1` - Event is unauthorized (treat as regular event)
- `-2` - Validation error (malformed event)

**Error Handling**: Writes a detailed error message into a caller-provided buffer for client feedback


### 5.2 Enhanced Event Routing

**Location**: [`main.c:3248-3340`](src/main.c:3248)

**Integration**: Replaces existing admin event routing logic

**Dependencies**:

- `is_authorized_admin_event()` for authorization
- `process_admin_event_in_config()` for admin processing
- `store_event()` and `broadcast_event_to_subscriptions()` for regular events

**Security Features**:

- Graceful degradation for unauthorized admin events
- Comprehensive logging of authorization attempts
- No broadcast of admin events to subscriptions
- Detailed error responses for failed authorization
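
The routing decision described in 5.2 reduces to a small mapping from the event kind and the return code of `is_authorized_admin_event()` to a destination. The following is a minimal sketch under that assumption; the `event_route_t` enum and `route_event()` are hypothetical names for illustration, not functions that exist in `src/main.c`.

```c
#include <assert.h>

/* Hypothetical routing targets; names are illustrative only. */
typedef enum {
    ROUTE_REGULAR,    /* store_event() + broadcast_event_to_subscriptions() */
    ROUTE_ADMIN_API,  /* process_admin_event_in_config(); never broadcast */
    ROUTE_REJECT      /* reply with an OK false carrying the error message */
} event_route_t;

/* Decide where an event goes, given its kind and the return code of
 * is_authorized_admin_event(): 0 = authorized, -1 = unauthorized,
 * -2 = malformed. */
event_route_t route_event(int kind, int auth_result) {
    if (kind != 23455 && kind != 23456) {
        return ROUTE_REGULAR;              /* not an admin kind at all */
    }
    /* Documents the contract; with NDEBUG, unknown codes fall to ROUTE_REJECT */
    assert(auth_result >= -2 && auth_result <= 0);
    switch (auth_result) {
    case 0:
        return ROUTE_ADMIN_API;
    case -1:
        /* Graceful degradation: the caller logs the attempt with
         * log_warning() and then processes the event like any other. */
        return ROUTE_REGULAR;
    default:
        return ROUTE_REJECT;               /* -2: validation error */
    }
}
```

Keeping the decision in a pure function like this would let the three outcomes be unit-tested without a live WebSocket connection, while the side effects (logging, storing, broadcasting, sending the response) stay in the caller.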


### 5.4 Defense-in-Depth Validation

**Primary Validation**: In main event routing logic

**Secondary Validation**: In `process_admin_event_in_config()` function

**Tertiary Validation**: In individual admin command handlers

**Validation Layers**:

1. **Kind Check** - Must be an admin event kind (23455/23456)
2. **Relay Targeting Check** - Must have a 'p' tag with this relay's pubkey
3. **Admin Signature Check** - Must be signed by the authorized admin (checked only if the event targets this relay)
4. **Processing Check** - Additional validation in the admin handlers

**Security Logic**:

- No 'p' tag for this relay → the admin event is meant for a different relay (not an unauthorized attempt)
- 'p' tag for this relay + wrong admin signature → "Unauthorized admin event attempt"
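
Layers 1 to 3 and the security logic above can be sketched as a single C function. This is a hypothetical illustration, not the planned `is_authorized_admin_event()`: it works on a simplified `admin_event_view_t` struct instead of a cJSON object, takes the relay and admin pubkeys as arguments instead of reading them with `get_config_value()`, assumes lowercase hex pubkeys, and assumes the event signature was already verified during unified validation. Layer 4 (handler-level checks) is out of scope.

```c
#include <assert.h>
#include <stdio.h>
#include <string.h>

/* Hypothetical, simplified view of an event (the relay itself parses cJSON). */
typedef struct {
    const char *name;   /* tag name, e.g. "p" */
    const char *value;  /* first tag value, e.g. a hex pubkey */
} event_tag_t;

typedef struct {
    int kind;
    const char *pubkey;          /* hex pubkey of the (already verified) signer */
    const event_tag_t *tags;
    size_t tag_count;
} admin_event_view_t;

/* Return codes as listed in 5.1. */
enum { AUTH_OK = 0, AUTH_UNAUTHORIZED = -1, AUTH_INVALID = -2 };

int check_admin_event(const admin_event_view_t *ev, const char *relay_pubkey,
                      const char *admin_pubkey, char *err, size_t err_len) {
    assert(ev != NULL && relay_pubkey != NULL && admin_pubkey != NULL);
    assert(err != NULL || err_len == 0);
    if (err_len > 0) {
        err[0] = '\0';
    }

    /* Layer 1: kind check */
    if (ev->kind != 23455 && ev->kind != 23456) {
        snprintf(err, err_len, "not an admin event kind");
        return AUTH_UNAUTHORIZED;
    }
    if (ev->pubkey == NULL || (ev->tag_count > 0 && ev->tags == NULL)) {
        snprintf(err, err_len, "invalid: malformed admin event");
        return AUTH_INVALID;
    }

    /* Layer 2: relay targeting check ('p' tag carrying this relay's pubkey) */
    int targets_this_relay = 0;
    for (size_t i = 0; i < ev->tag_count; i++) {
        const event_tag_t *t = &ev->tags[i];
        if (t->name != NULL && t->value != NULL &&
            strcmp(t->name, "p") == 0 && strcmp(t->value, relay_pubkey) == 0) {
            targets_this_relay = 1;
            break;
        }
    }
    if (!targets_this_relay) {
        /* Meant for a different relay: not an unauthorized attempt */
        snprintf(err, err_len, "admin event targets a different relay");
        return AUTH_UNAUTHORIZED;
    }

    /* Layer 3: signer must be the configured admin (only checked for this relay) */
    if (strcmp(ev->pubkey, admin_pubkey) != 0) {
        snprintf(err, err_len, "Unauthorized admin event attempt");
        return AUTH_UNAUTHORIZED;
    }
    return AUTH_OK;
}
```

The order matters: the admin-signer comparison only runs once the event is known to target this relay, which is what separates "admin event for another relay" from a genuine unauthorized attempt in the logs.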


## Phase 6: Event Flow Documentation

### 6.1 Complete Event Processing Flow


```
┌─────────────────┐
│ WebSocket Input │
└────────┬────────┘
         │
         ▼
┌─────────────────┐
│ Unified         │
│ Validation      │ ← nostr_validate_unified_request()
└────────┬────────┘
         │
         ▼
┌─────────────────┐
│ Kind-Based      │
│ Routing Check   │ ← Check if kind 23455/23456
└────────┬────────┘
         │
    ┌────▼────┐
    │ Admin?  │
    └────┬────┘
         ├──── NO ──────────────────┐
     YES ▼                          ▼
 ┌───────────────┐          ┌───────────────┐
 │ Admin         │          │ Regular Event │
 │ Authorization │          │ Processing    │
 │ Check         │          └───────┬───────┘
 └───────┬───────┘                  │
         │                          │
  ┌──────▼──────┐                   │
  │ Authorized? │                   │
  └──────┬──────┘                   │
         ├──── NO ───┐              │
     YES │           │              │
     ┌───▼───┐ ┌─────▼────┐         │
     │ Admin │ │ Treat as │         │
     │ API   │ │ Regular  ├─────────┤
     └───┬───┘ └──────────┘         │
         ▼                          ▼
   ┌───────────┐            ┌───────────────┐
   │ WebSocket │            │ store_event() │
   │ Response  │            │ + broadcast() │
   └─────┬─────┘            └───────┬───────┘
         │                          ▼
         │                  ┌───────────────┐
         │                  │ WebSocket     │
         │                  │ OK Response   │
         │                  └───────┬───────┘
         │                          │
         └────────────┬─────────────┘
                      ▼
             ┌─────────────────┐
             │ Unified         │
             │ WebSocket       │
             │ Output          │
             └─────────────────┘
```


### 6.2 Security Decision Points

1. **Event Kind Check** - Identifies potential admin events
2. **Authorization Validation** - Three-layer security check
3. **Routing Decision** - Admin API vs Regular processing
4. **Response Generation** - Unified output pipeline
5. **Audit Logging** - Security event tracking


### 6.3 Error Handling Paths

**Validation Errors**: Return detailed error messages via OK response

**Authorization Failures**: Log security event + treat as regular event

**Processing Errors**: Return admin-specific error responses

**System Errors**: Fall back to standard error handling
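
Validation errors travel back as a NIP-01 `OK` message of the form `["OK", <event id>, false, <message>]`, and NIP-01 expects a rejected event's message to start with a machine-readable prefix such as `invalid:` or `error:`. The plan itself builds this frame with cJSON; the following is a minimal alternative sketch without cJSON. `format_ok_frame()` is a hypothetical helper, and its escaping only covers quotes and backslashes (control characters are replaced with spaces to keep it short).

```c
#include <assert.h>
#include <stdio.h>
#include <string.h>

/* Format ["OK", <event id>, <true|false>, <message>] into `out`.
 * Returns the frame length, or -1 if the message or frame does not fit. */
int format_ok_frame(char *out, size_t cap, const char *event_id,
                    int accepted, const char *message) {
    assert(out != NULL && cap > 0 && event_id != NULL && message != NULL);

    /* JSON-escape the message: backslash-escape '"' and '\\',
     * replace control characters with spaces. */
    char escaped[512];
    size_t j = 0;
    for (const unsigned char *p = (const unsigned char *)message; *p; p++) {
        if (j + 2 >= sizeof escaped) {
            return -1;                     /* message too long for this sketch */
        }
        if (*p == '"' || *p == '\\') {
            escaped[j++] = '\\';
            escaped[j++] = (char)*p;
        } else {
            escaped[j++] = (*p < 0x20) ? ' ' : (char)*p;
        }
    }
    escaped[j] = '\0';

    int n = snprintf(out, cap, "[\"OK\",\"%s\",%s,\"%s\"]",
                     event_id, accepted ? "true" : "false", escaped);
    return (n < 0 || (size_t)n >= cap) ? -1 : n;
}
```

For example, `format_ok_frame(buf, sizeof buf, id, 0, "invalid: missing 'p' tag")` would produce a rejection the client can parse by prefix, while the full explanation remains readable after the colon.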


This completes the comprehensive implementation plan for the enhanced admin event API structure with unified output flow architecture.


107

Makefile


@@ -2,15 +2,16 @@

CC = gcc
CFLAGS = -Wall -Wextra -std=c99 -g -O2
INCLUDES = -I. -Inostr_core_lib -Inostr_core_lib/nostr_core -Inostr_core_lib/cjson -Inostr_core_lib/nostr_websocket
LIBS = -lsqlite3 -lwebsockets -lz -ldl -lpthread -lm -L/usr/local/lib -lsecp256k1 -lssl -lcrypto -L/usr/local/lib -lcurl
INCLUDES = -I. -Ic_utils_lib/src -Inostr_core_lib -Inostr_core_lib/nostr_core -Inostr_core_lib/cjson -Inostr_core_lib/nostr_websocket
LIBS = -lsqlite3 -lwebsockets -lz -ldl -lpthread -lm -L/usr/local/lib -lsecp256k1 -lssl -lcrypto -L/usr/local/lib -lcurl -Lc_utils_lib -lc_utils

# Build directory
BUILD_DIR = build

# Source files
MAIN_SRC = src/main.c src/config.c src/request_validator.c
MAIN_SRC = src/main.c src/config.c src/dm_admin.c src/request_validator.c src/nip009.c src/nip011.c src/nip013.c src/nip040.c src/nip042.c src/websockets.c src/subscriptions.c src/api.c src/embedded_web_content.c
NOSTR_CORE_LIB = nostr_core_lib/libnostr_core_x64.a
C_UTILS_LIB = c_utils_lib/libc_utils.a

# Architecture detection
ARCH = $(shell uname -m)

@@ -32,14 +33,27 @@ $(BUILD_DIR):
mkdir -p $(BUILD_DIR)

# Check if nostr_core_lib is built
# Explicitly specify NIPs to ensure NIP-44 (encryption) is included
# NIPs: 1 (basic), 6 (keys), 13 (PoW), 17 (DMs), 19 (bech32), 44 (encryption), 59 (gift wrap)
$(NOSTR_CORE_LIB):
@echo "Building nostr_core_lib..."
cd nostr_core_lib && ./build.sh
@echo "Building nostr_core_lib with required NIPs (including NIP-44 for encryption)..."
cd nostr_core_lib && ./build.sh --nips=1,6,13,17,19,44,59

# Generate version.h from git tags
src/version.h:
@if [ -d .git ]; then \
echo "Generating version.h from git tags..."; \
# Check if c_utils_lib is built
$(C_UTILS_LIB):
@echo "Building c_utils_lib..."
cd c_utils_lib && ./build.sh lib

# Update main.h version information (requires main.h to exist)
src/main.h:
@if [ ! -f src/main.h ]; then \
echo "ERROR: src/main.h not found!"; \
echo "Please ensure src/main.h exists with relay metadata."; \
echo "Copy from a backup or create manually with proper relay configuration."; \
exit 1; \
fi; \
if [ -d .git ]; then \
echo "Updating main.h version information from git tags..."; \
RAW_VERSION=$$(git describe --tags --always 2>/dev/null || echo "unknown"); \
if echo "$$RAW_VERSION" | grep -q "^v[0-9]"; then \
CLEAN_VERSION=$$(echo "$$RAW_VERSION" | sed 's/^v//' | cut -d- -f1); \

@@ -51,54 +65,34 @@ src/version.h:
VERSION="v0.0.0"; \
MAJOR=0; MINOR=0; PATCH=0; \
fi; \
echo "/* Auto-generated version information */" > src/version.h; \
echo "#ifndef VERSION_H" >> src/version.h; \
echo "#define VERSION_H" >> src/version.h; \
echo "" >> src/version.h; \
echo "#define VERSION \"$$VERSION\"" >> src/version.h; \
echo "#define VERSION_MAJOR $$MAJOR" >> src/version.h; \
echo "#define VERSION_MINOR $$MINOR" >> src/version.h; \
echo "#define VERSION_PATCH $$PATCH" >> src/version.h; \
echo "" >> src/version.h; \
echo "#endif /* VERSION_H */" >> src/version.h; \
echo "Generated version.h with clean version: $$VERSION"; \
elif [ ! -f src/version.h ]; then \
echo "Git not available and version.h missing, creating fallback version.h..."; \
VERSION="v0.0.0"; \
echo "/* Auto-generated version information */" > src/version.h; \
echo "#ifndef VERSION_H" >> src/version.h; \
echo "#define VERSION_H" >> src/version.h; \
echo "" >> src/version.h; \
echo "#define VERSION \"$$VERSION\"" >> src/version.h; \
echo "#define VERSION_MAJOR 0" >> src/version.h; \
echo "#define VERSION_MINOR 0" >> src/version.h; \
echo "#define VERSION_PATCH 0" >> src/version.h; \
echo "" >> src/version.h; \
echo "#endif /* VERSION_H */" >> src/version.h; \
echo "Created fallback version.h with version: $$VERSION"; \
echo "Updating version information in existing main.h..."; \
sed -i "s/#define VERSION \".*\"/#define VERSION \"$$VERSION\"/g" src/main.h; \
sed -i "s/#define VERSION_MAJOR [0-9]*/#define VERSION_MAJOR $$MAJOR/g" src/main.h; \
sed -i "s/#define VERSION_MINOR [0-9]*/#define VERSION_MINOR $$MINOR/g" src/main.h; \
sed -i "s/#define VERSION_PATCH [0-9]*/#define VERSION_PATCH $$PATCH/g" src/main.h; \
echo "Updated main.h version to: $$VERSION"; \
else \
echo "Git not available, preserving existing version.h"; \
echo "Git not available, preserving existing main.h version information"; \
fi


# Force version.h regeneration (useful for development)
# Update main.h version information (requires existing main.h)
force-version:
@echo "Force regenerating version.h..."
@rm -f src/version.h
@$(MAKE) src/version.h
@echo "Force updating main.h version information..."
@$(MAKE) src/main.h

# Build the relay
$(TARGET): $(BUILD_DIR) src/version.h src/sql_schema.h $(MAIN_SRC) $(NOSTR_CORE_LIB)
$(TARGET): $(BUILD_DIR) src/main.h src/sql_schema.h $(MAIN_SRC) $(NOSTR_CORE_LIB) $(C_UTILS_LIB)
@echo "Compiling C-Relay for architecture: $(ARCH)"
$(CC) $(CFLAGS) $(INCLUDES) $(MAIN_SRC) -o $(TARGET) $(NOSTR_CORE_LIB) $(LIBS)
$(CC) $(CFLAGS) $(INCLUDES) $(MAIN_SRC) -o $(TARGET) $(NOSTR_CORE_LIB) $(C_UTILS_LIB) $(LIBS)
@echo "Build complete: $(TARGET)"

# Build for specific architectures
x86: $(BUILD_DIR) src/version.h src/sql_schema.h $(MAIN_SRC) $(NOSTR_CORE_LIB)
x86: $(BUILD_DIR) src/main.h src/sql_schema.h $(MAIN_SRC) $(NOSTR_CORE_LIB) $(C_UTILS_LIB)
@echo "Building C-Relay for x86_64..."
$(CC) $(CFLAGS) $(INCLUDES) $(MAIN_SRC) -o $(BUILD_DIR)/c_relay_x86 $(NOSTR_CORE_LIB) $(LIBS)
$(CC) $(CFLAGS) $(INCLUDES) $(MAIN_SRC) -o $(BUILD_DIR)/c_relay_x86 $(NOSTR_CORE_LIB) $(C_UTILS_LIB) $(LIBS)
@echo "Build complete: $(BUILD_DIR)/c_relay_x86"

arm64: $(BUILD_DIR) src/version.h src/sql_schema.h $(MAIN_SRC) $(NOSTR_CORE_LIB)
arm64: $(BUILD_DIR) src/main.h src/sql_schema.h $(MAIN_SRC) $(NOSTR_CORE_LIB) $(C_UTILS_LIB)
@echo "Cross-compiling C-Relay for ARM64..."
@if ! command -v aarch64-linux-gnu-gcc >/dev/null 2>&1; then \
echo "ERROR: ARM64 cross-compiler not found."; \
@@ -122,7 +116,7 @@ arm64: $(BUILD_DIR) src/version.h src/sql_schema.h $(MAIN_SRC) $(NOSTR_CORE_LIB)
fi
@echo "Using aarch64-linux-gnu-gcc with ARM64 libraries..."
PKG_CONFIG_PATH=/usr/lib/aarch64-linux-gnu/pkgconfig:/usr/share/pkgconfig \
aarch64-linux-gnu-gcc $(CFLAGS) $(INCLUDES) $(MAIN_SRC) -o $(BUILD_DIR)/c_relay_arm64 $(NOSTR_CORE_LIB) \
aarch64-linux-gnu-gcc $(CFLAGS) $(INCLUDES) $(MAIN_SRC) -o $(BUILD_DIR)/c_relay_arm64 $(NOSTR_CORE_LIB) $(C_UTILS_LIB) \
-L/usr/lib/aarch64-linux-gnu $(LIBS)
@echo "Build complete: $(BUILD_DIR)/c_relay_arm64"


@@ -171,12 +165,12 @@ init-db:
# Clean build artifacts
clean:
rm -rf $(BUILD_DIR)
rm -f src/version.h
@echo "Clean complete"

# Clean everything including nostr_core_lib
# Clean everything including nostr_core_lib and c_utils_lib
clean-all: clean
cd nostr_core_lib && make clean 2>/dev/null || true
cd c_utils_lib && make clean 2>/dev/null || true

# Install dependencies (Ubuntu/Debian)
install-deps:

@@ -210,6 +204,23 @@ help:
@echo " make check-toolchain # Check what compilers are available"
@echo " make test # Run tests"
@echo " make init-db # Set up database"
@echo " make force-version # Force regenerate version.h from git"
@echo " make force-version # Force regenerate main.h from git"

# Build fully static MUSL binaries using Docker
static-musl-x86_64:
@echo "Building fully static MUSL binary for x86_64..."
docker buildx build --platform linux/amd64 -f examples/deployment/static-builder.Dockerfile -t c-relay-static-builder-x86_64 --load .
docker run --rm -v $(PWD)/build:/output c-relay-static-builder-x86_64 sh -c "cp /c_relay_static_musl_x86_64 /output/"
@echo "Static binary created: build/c_relay_static_musl_x86_64"

static-musl-arm64:
@echo "Building fully static MUSL binary for ARM64..."
docker buildx build --platform linux/arm64 -f examples/deployment/static-builder.Dockerfile -t c-relay-static-builder-arm64 --load .
docker run --rm -v $(PWD)/build:/output c-relay-static-builder-arm64 sh -c "cp /c_relay_static_musl_x86_64 /output/c_relay_static_musl_arm64"
@echo "Static binary created: build/c_relay_static_musl_arm64"

static-musl: static-musl-x86_64 static-musl-arm64
@echo "Built static MUSL binaries for both architectures"

.PHONY: static-musl-x86_64 static-musl-arm64 static-musl
.PHONY: all x86 arm64 test init-db clean clean-all install-deps install-cross-tools install-arm64-deps check-toolchain help force-version

65

NOSTR_RELEASE.md

Normal file


@@ -0,0 +1,65 @@
# Relay

I am releasing the code for the nostr relay that I wrote and use myself. The code is free for anyone to use in any way that they wish.

Some of the features of this relay are conventional, and some are unconventional.

## The conventional

This relay is written in C99 with a SQLite database.

It implements the following NIPs:

- [x] NIP-01: Basic protocol flow implementation
- [x] NIP-09: Event deletion
- [x] NIP-11: Relay information document
- [x] NIP-13: Proof of Work
- [x] NIP-15: End of Stored Events Notice
- [x] NIP-20: Command Results
- [x] NIP-33: Parameterized Replaceable Events
- [x] NIP-40: Expiration Timestamp
- [x] NIP-42: Authentication of clients to relays
- [x] NIP-45: Counting results
- [x] NIP-50: Keywords filter
- [x] NIP-70: Protected Events


## The unconventional

### The binaries are fully self-contained.

It should just run on Linux without you having to worry about what is installed on your system. I want to download and run. No Docker. No dependency hell.

I'm not bothering with other operating systems.

### The relay is a full nostr citizen with its own public and private keys.

For example, you can see my relay's profile (wss://relay.laantungir.net) running here:

[Primal link](https://primal.net/p/nprofile1qqswn2jsmm8lq8evas0v9vhqkdpn9nuujt90mtz60nqgsxndy66es4qjjnhr7)

What this means in practice is that when you start the relay, it generates keys for itself and for its administrator (you can specify these if you wish).

Now the program and the administrator can have verified communication between the two, simply by using nostr events. For example, the administrator can send DMs to the relay asking for its status, or change its configuration, through any client that can handle NIP-17 DMs. The relay can also send notifications to the administrator about its current status, or it can publish its status on a regular schedule directly to Nostr as kind-1 notes.

Also included is a more standard administrative web front end. This front end communicates with the relay using an extensive API, which again consists of nostr events signed by the administrator. You can completely control the relay through signed nostr events.


## Screenshots

|

||||

|

||||

|

||||

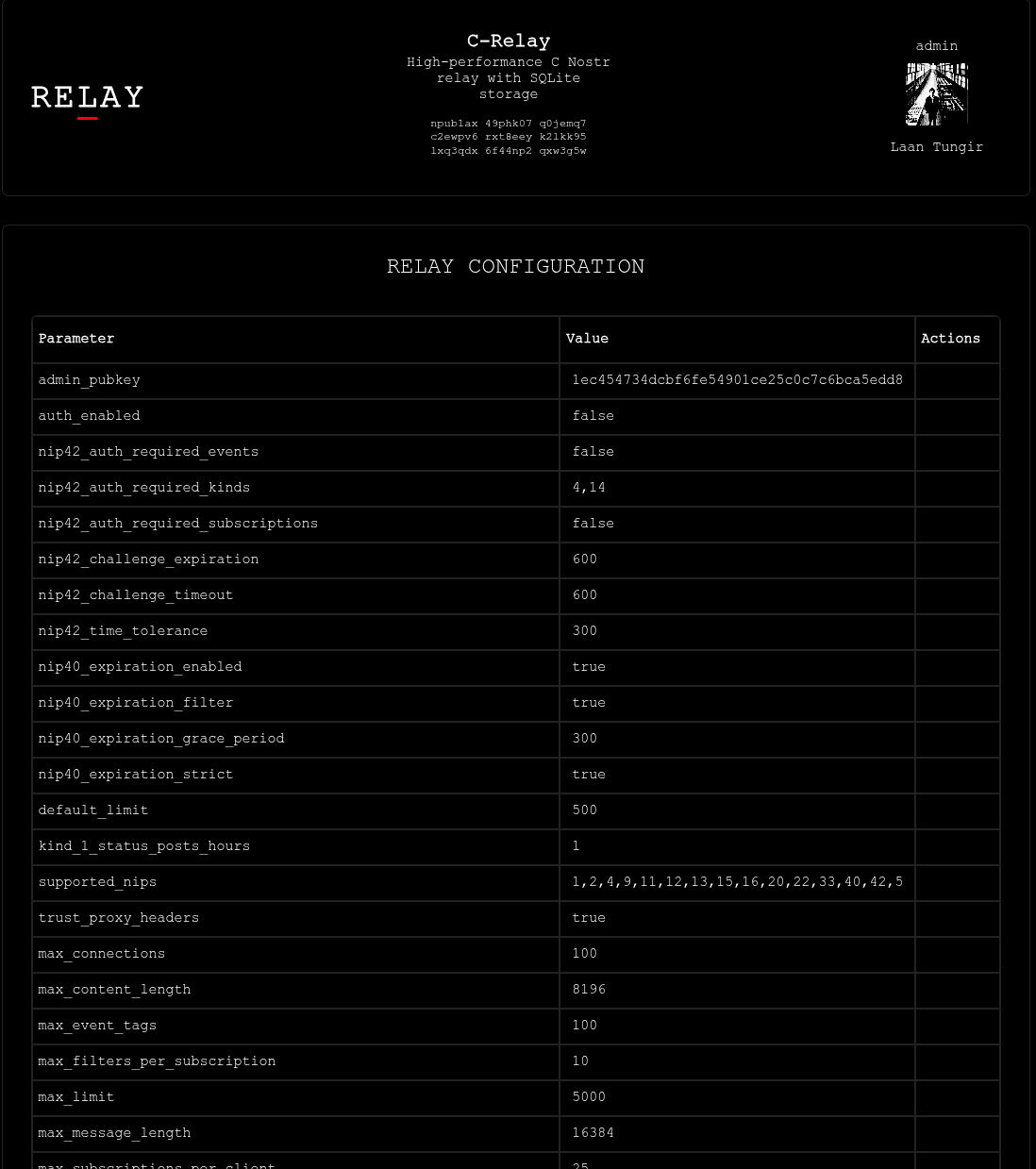

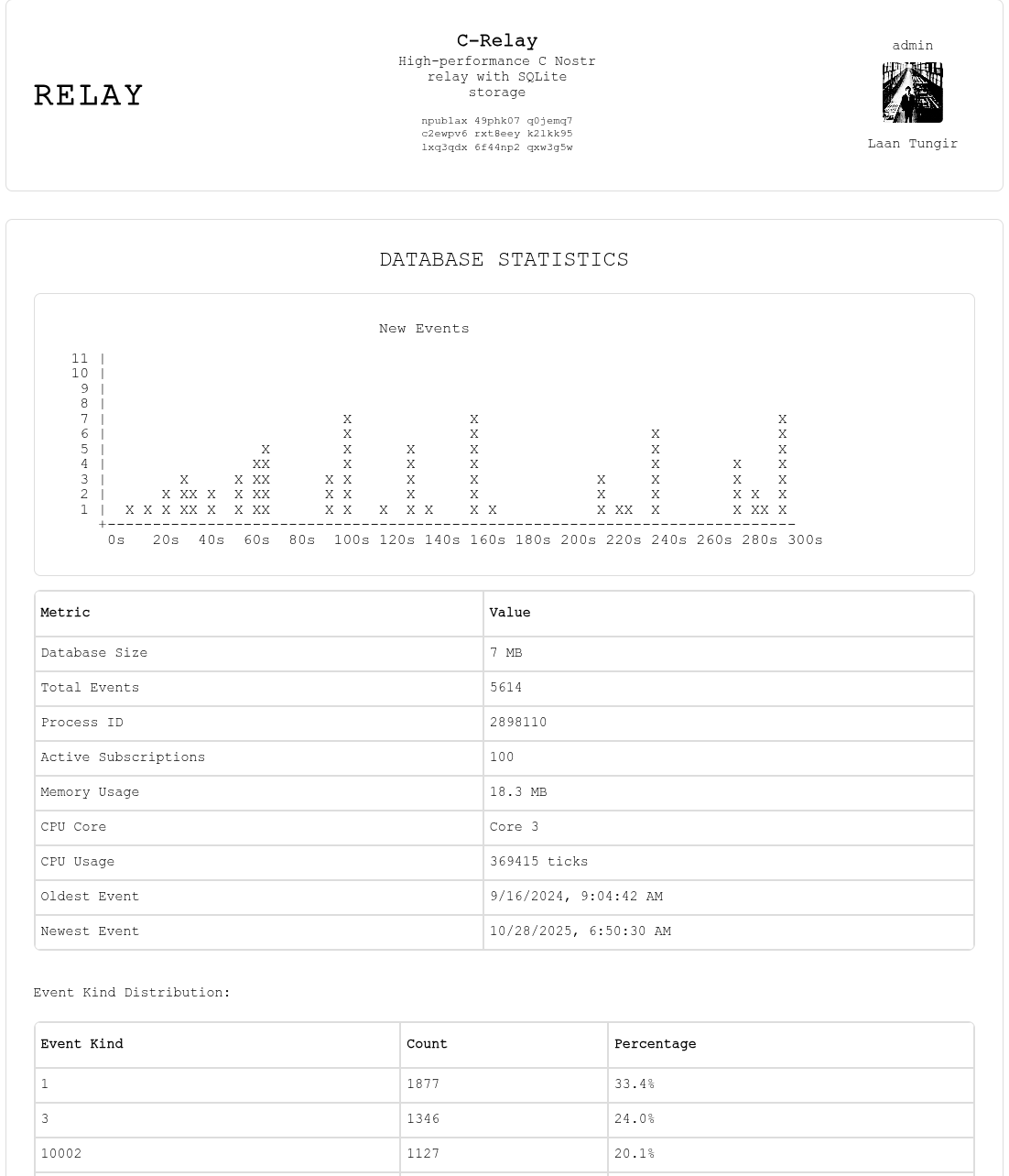

Main page with real time updates.

|

||||

|

||||

|

||||

Set your configuration preferences.

|

||||

|

||||

|

||||

View current subscriptions

|

||||

|

||||

|

||||

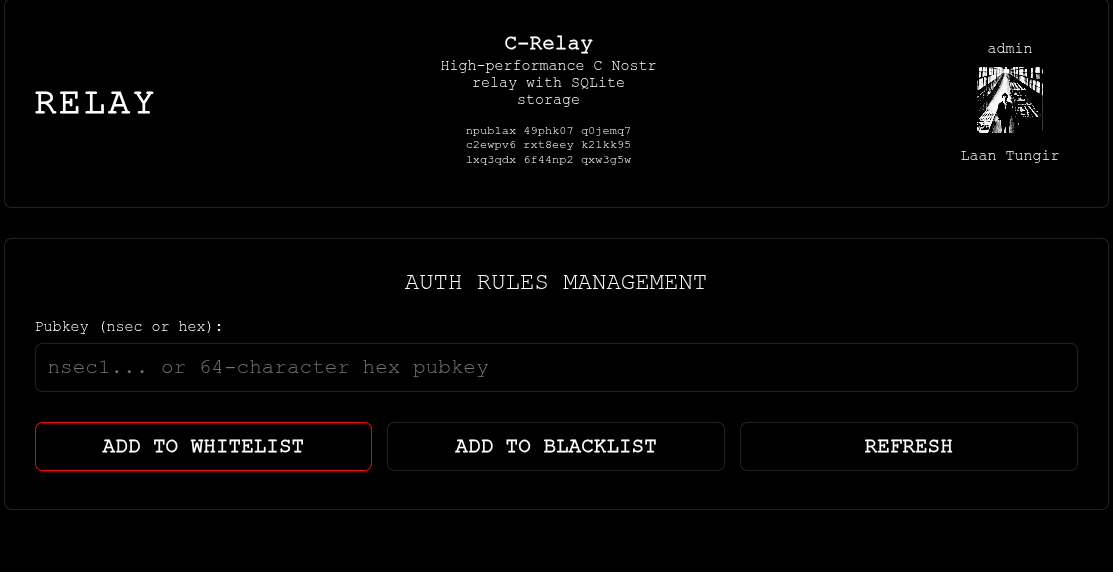

Add npubs to white or black lists.

|

||||

|

||||

|

||||

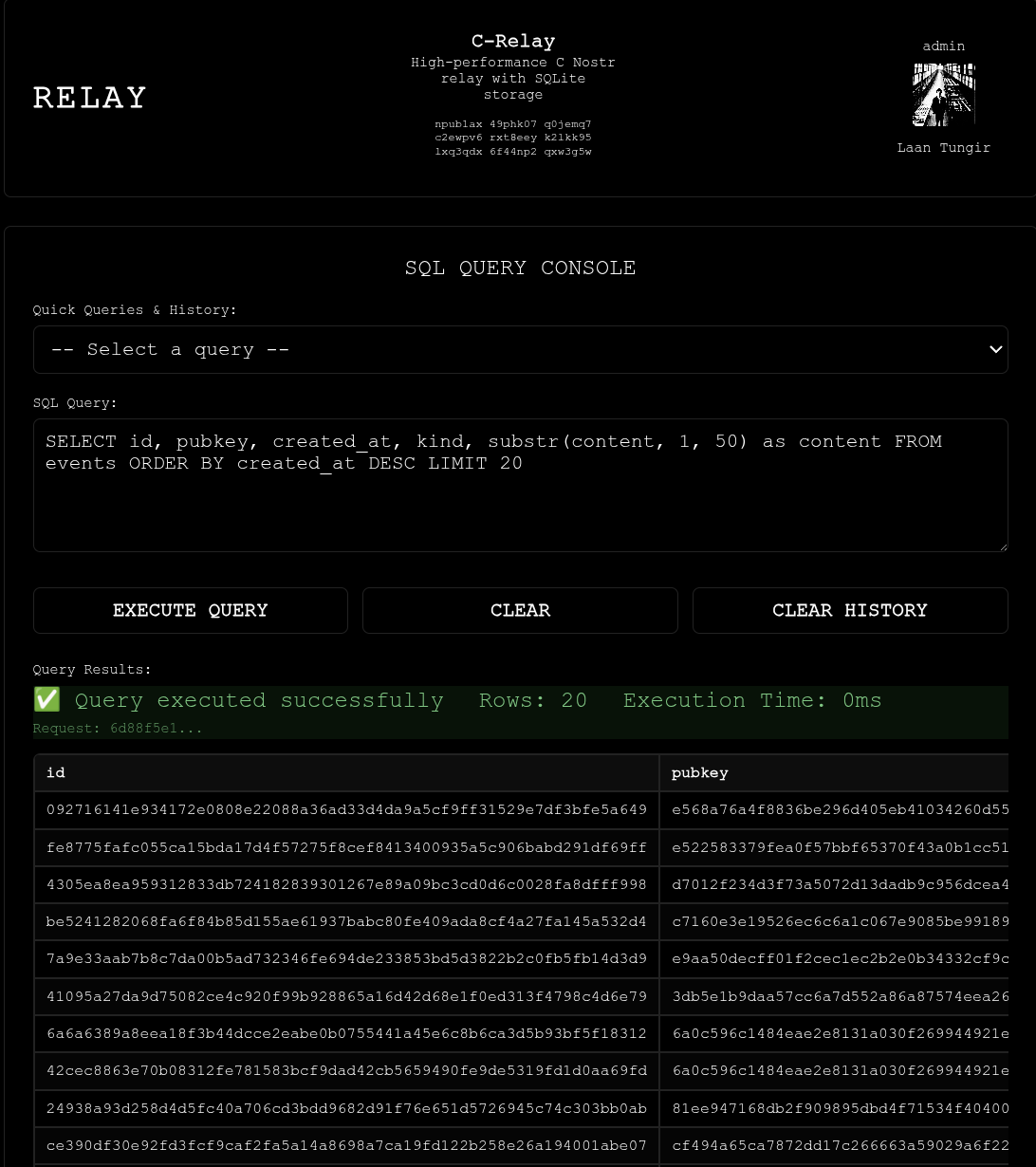

Run sql queries on the database.

|

||||

|

||||

|

||||

Light mode.

|

||||

|

||||

345

README.md


@@ -1,8 +1,8 @@
# C Nostr Relay - Event-Based Configuration System
# C-Nostr Relay

A high-performance Nostr relay implemented in C with SQLite backend, featuring a revolutionary **zero-configuration** approach using event-based configuration management.
A high-performance Nostr relay implemented in C with SQLite backend, featuring nostr event-based management.

## 📜 Supported NIPs
## Supported NIPs


<!--


NOTE FOR ASSISTANTS: When updating the NIPs checklist below, ONLY change [ ] to [x] to mark as complete.


@@ -18,13 +18,89 @@ Do NOT modify the formatting, add emojis, or change the text. Keep the simple fo
- [x] NIP-33: Parameterized Replaceable Events
- [x] NIP-40: Expiration Timestamp
- [x] NIP-42: Authentication of clients to relays
- [ ] NIP-45: Counting results
- [ ] NIP-50: Keywords filter
- [ ] NIP-70: Protected Events
- [x] NIP-45: Counting results
- [x] NIP-50: Keywords filter
- [x] NIP-70: Protected Events


## 🔧 Administrator API
## Quick Start

C-Relay uses an innovative **event-based administration system** where all configuration and management commands are sent as signed Nostr events using the admin private key generated during first startup. All admin commands use **tag-based parameters** for simplicity and compatibility.
Get your C-Relay up and running in minutes with a static binary (no dependencies required):

### 1. Download Static Binary

Download the latest static release from the [releases page](https://git.laantungir.net/laantungir/c-relay/releases):

```bash
# Static binary - works on all Linux distributions (no dependencies)
wget https://git.laantungir.net/laantungir/c-relay/releases/download/v0.6.0/c-relay-v0.6.0-linux-x86_64-static
chmod +x c-relay-v0.6.0-linux-x86_64-static
mv c-relay-v0.6.0-linux-x86_64-static c-relay
```


### 2. Start the Relay

Simply run the binary - no configuration files needed:

```bash
./c-relay
```

On first startup, you'll see:

- **Admin Private Key**: Save this securely! You'll need it for administration
- **Relay Public Key**: Your relay's identity on the Nostr network
- **Port Information**: Default is 8888, or the next available port

### 3. Access the Web Interface

Open your browser and navigate to:

```
http://localhost:8888/api/
```

The web interface provides:

- Real-time configuration management
- Database statistics dashboard
- Auth rules management
- Secure admin authentication with your Nostr identity


### 4. Test Your Relay

Test basic connectivity:

```bash
# Fetch the NIP-11 relay information document (plain HTTP, not a WebSocket connection)
curl -H "Accept: application/nostr+json" http://localhost:8888

# Test with a Nostr client
# Add ws://localhost:8888 to your client's relay list
```

### 5. Configure Your Relay (Optional)

Use the web interface or send admin commands to customize:

- Relay name and description
- Authentication rules (whitelist/blacklist)
- Connection limits
- Proof-of-work requirements

**That's it!** Your relay is now running with zero configuration required. The event-based configuration system means you can adjust all settings through the web interface or admin API without editing config files.



## Web Admin Interface

C-Relay includes a **built-in web-based administration interface** accessible at `http://localhost:8888/api/`. The interface provides:

- **Real-time Configuration Management**: View and edit all relay settings through a web UI
- **Database Statistics Dashboard**: Monitor event counts, storage usage, and performance metrics
- **Auth Rules Management**: Configure whitelist/blacklist rules for pubkeys
- **NIP-42 Authentication**: Secure access using your Nostr identity
- **Event-Based Updates**: All changes are applied as cryptographically signed Nostr events

The web interface serves embedded static files with no external dependencies and includes proper CORS headers for browser compatibility.



## Administrator API

C-Relay uses an innovative **event-based administration system** where all configuration and management commands are sent as signed Nostr events using the admin private key generated during first startup. All admin commands use **NIP-44 encrypted command arrays** for security and compatibility.

### Authentication

@@ -32,7 +108,7 @@ All admin commands require signing with the admin private key displayed during f

### Event Structure

All admin commands use the same unified event structure with tag-based parameters:
All admin commands use the same unified event structure with NIP-44 encrypted content:

**Admin Command Event:**

```json
@@ -41,14 +117,16 @@ All admin commands use the same unified event structure with tag-based parameter
"pubkey": "admin_public_key",
"created_at": 1234567890,
"kind": 23456,
"content": "<nip44 encrypted command>",
"content": "AqHBUgcM7dXFYLQuDVzGwMST1G8jtWYyVvYxXhVGEu4nAb4LVw...",
"tags": [
["p", "relay_public_key"],
["p", "relay_public_key"]
],
"sig": "event_signature"
}
```


The `content` field contains a NIP-44 encrypted JSON array representing the command.
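
The plaintext that gets encrypted into `content` is an ordinary JSON array of the command and its arguments, such as `["auth_query", "whitelist"]`. A minimal C sketch of producing that plaintext for commands whose elements are all strings; `build_command_json()` and its helpers are hypothetical, and the NIP-44 encryption step itself (the Makefile builds nostr_core_lib with NIP-44 enabled) is not shown.

```c
#include <assert.h>
#include <stdio.h>
#include <string.h>

/* Append one byte, always keeping room for the final '\0'. */
static int put_char(char *out, size_t cap, size_t *len, char c) {
    if (*len + 1 >= cap) return -1;
    out[(*len)++] = c;
    return 0;
}

/* Append s as a JSON string literal, escaping quotes, backslashes
 * and control characters. */
static int put_json_string(char *out, size_t cap, size_t *len, const char *s) {
    if (put_char(out, cap, len, '"') < 0) return -1;
    for (const unsigned char *p = (const unsigned char *)s; *p; p++) {
        if (*p == '"' || *p == '\\') {
            if (put_char(out, cap, len, '\\') < 0 ||
                put_char(out, cap, len, (char)*p) < 0) return -1;
        } else if (*p < 0x20) {
            char esc[7];
            snprintf(esc, sizeof esc, "\\u%04x", (unsigned int)*p);
            for (int i = 0; esc[i]; i++)
                if (put_char(out, cap, len, esc[i]) < 0) return -1;
        } else if (put_char(out, cap, len, (char)*p) < 0) {
            return -1;
        }
    }
    return put_char(out, cap, len, '"');
}

/* Build e.g. ["auth_query","whitelist"] into out.
 * Returns the string length, or -1 (with out set to "") if cap is too small. */
int build_command_json(char *out, size_t cap, const char *const *args, size_t argc) {
    assert(out != NULL && cap > 0);
    size_t len = 0;
    int rc = put_char(out, cap, &len, '[');
    for (size_t i = 0; rc == 0 && i < argc; i++) {
        if (i > 0) rc = put_char(out, cap, &len, ',');
        if (rc == 0) rc = put_json_string(out, cap, &len, args[i]);
    }
    if (rc == 0) rc = put_char(out, cap, &len, ']');
    if (rc != 0) {
        out[0] = '\0';
        return -1;
    }
    out[len] = '\0';
    return (int)len;
}
```

Commands such as `config_update`, whose second element is an array of objects, need a full JSON library (the relay already builds against cJSON) rather than this string-only helper.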


**Admin Response Event:**
```json
["EVENT", "temp_sub_id", {
@@ -56,7 +134,7 @@ All admin commands use the same unified event structure with tag-based parameter
"pubkey": "relay_public_key",
"created_at": 1234567890,
"kind": 23457,
"content": "<nip44 encrypted response>",
"content": "BpKCVhfN8eYtRmPqSvWxZnMkL2gHjUiOp3rTyEwQaS5dFg...",
"tags": [
["p", "admin_public_key"]
],
@@ -64,30 +142,44 @@ All admin commands use the same unified event structure with tag-based parameter
}]
```


The `content` field contains a NIP-44 encrypted JSON response object.

### Admin Commands

All commands are sent as nip44 encrypted content. The following table lists all available commands:
All commands are sent as NIP-44 encrypted JSON arrays in the event content. The following table lists all available commands:

| Command Type | Tag Format | Description |
|--------------|------------|-------------|
| Command Type | Command Format | Description |
|--------------|----------------|-------------|
| **Configuration Management** |
| `config_update` | `["relay_description", "My Relay"]` | Update relay configuration parameters |
| `config_query` | `["config_query", "list_all_keys"]` | List all available configuration keys |
| `config_update` | `["config_update", [{"key": "auth_enabled", "value": "true", "data_type": "boolean", "category": "auth"}, {"key": "relay_description", "value": "My Relay", "data_type": "string", "category": "relay"}, ...]]` | Update relay configuration parameters (supports multiple updates) |
| `config_query` | `["config_query", "all"]` | Query all configuration parameters |
| **Auth Rules Management** |
| `auth_add_blacklist` | `["blacklist", "pubkey", "abc123..."]` | Add pubkey to blacklist |
| `auth_add_whitelist` | `["whitelist", "pubkey", "def456..."]` | Add pubkey to whitelist |
| `auth_delete_rule` | `["delete_auth_rule", "blacklist", "pubkey", "abc123..."]` | Delete specific auth rule |
| `auth_query_all` | `["auth_query", "all"]` | Query all auth rules |
| `auth_query_type` | `["auth_query", "whitelist"]` | Query specific rule type |
| `auth_query_pattern` | `["auth_query", "pattern", "abc123..."]` | Query specific pattern |
| **System Commands** |
| `system_clear_auth` | `["system_command", "clear_all_auth_rules"]` | Clear all auth rules |
| `system_status` | `["system_command", "system_status"]` | Get system status |
| `stats_query` | `["stats_query"]` | Get comprehensive database statistics |
| **Database Queries** |